Can We Measure Software Slop? An Experiment

In this article, I propose a definition of software slop based on human attention (slop = code that hasn't been reviewed or verified) and sketch out a way to estimate how "sloppy" a piece of software is.

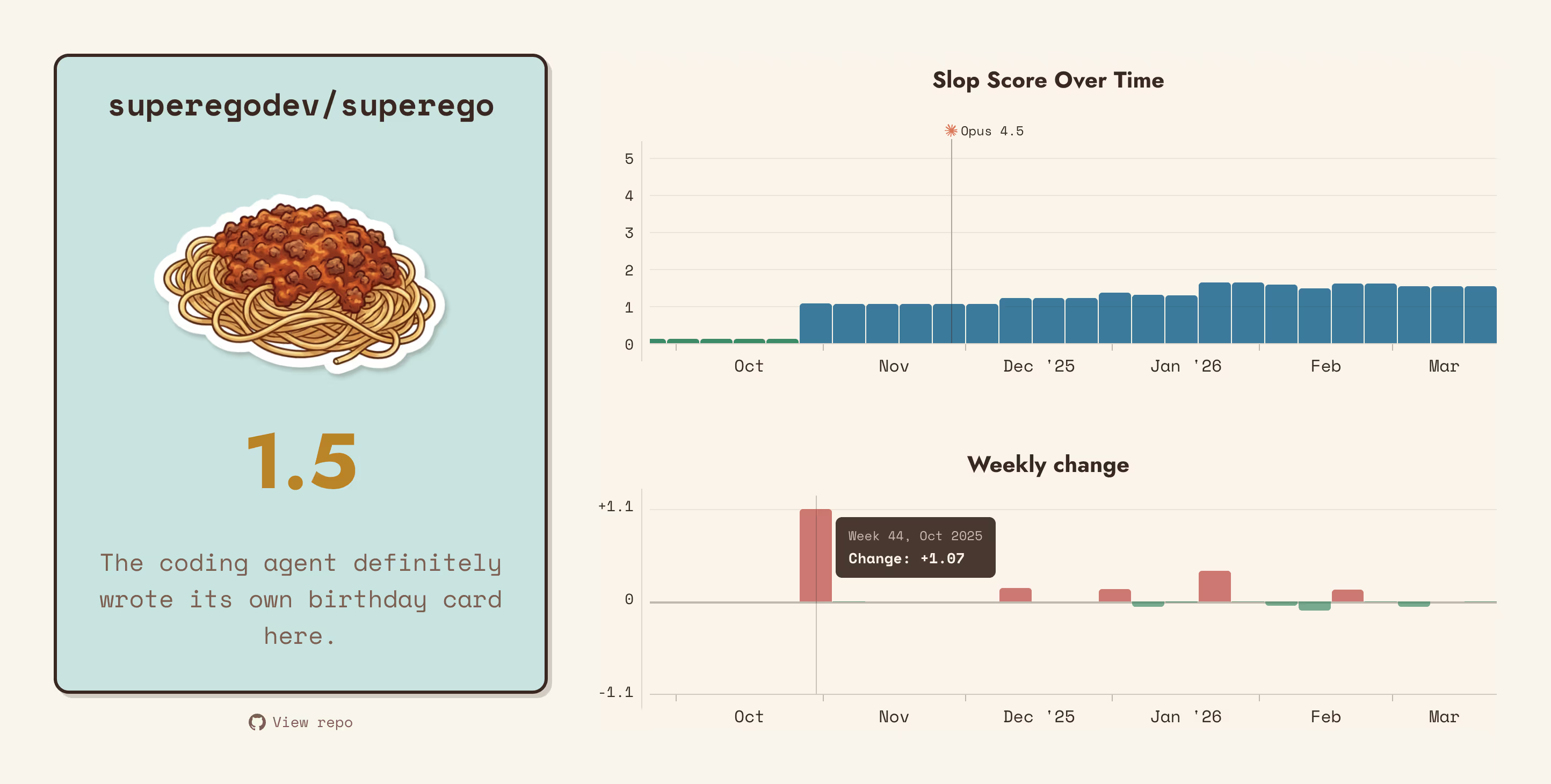

I put it to the test with Slop-O-Meter, an experimental tool that analyzes public GitHub repos and assigns them a sloppiness score. I then discuss the results of the tool, which are not very reliable, but interesting nonetheless.

A couple of weeks back, I shared on social media an app I’ve been working on for the past year, and got served this lovely piece of feedback:

If it wasn’t a slopware, I would have liked it.

The commenter was off the mark, but I can definitely understand where they’re coming from. “Thanks” to coding agents, in the past few months there’s been a tsunami of new software, and forums have been inundated by self-promotional posts I do see the irony of saying this. When I go on holiday I also complain that there are too many tourists. .

Mind you, I actually think it’s great that AI has lowered the barrier to being able to program and take more control of our computers. On the other hand, I too don’t want to invest my time into apps that are the result of a couple of days of prompting, with very little thought and commitment behind them.

So I started wondering: is there a way to detect when an app is slop? Can the “slop level” be somehow measured? Can I redeem my poor app’s honor?

A Definition Of Slop

As I see it, software slop is not about quality. LLMs can (and do) write shitty software, but so can humans. I think that, instead, slop is all about human attention. More specifically, human attention from someone who has some sort of stake in the thing being made.

LLM-generated code is slop when it has not been given attention; i.e., it has not been reviewed or verified by a human. Conversely, it stops being slop once a human carefully goes over it, edits it to match their quality standards, and verifies it works.

It’s all about having that seal of approval that tells us that someone invested said “yes, this thing’s all good, you can trust it” That’s why, pre-AI, slop was not that big of a problem: human attention was intrinsic to the process of making software. Though projects still could (and did) get sloppy when they were built by people who weren’t really invested in them. .

So, if we take a software product of a certain scope and size, we should be able to tell how “sloppy” it is by looking at two quantities:

- Attention cost: how much attention is generally required to give that seal of approval.

- Attention spent: how much attention the software actually got.

A simple formula would then give us a score for the sloppiness:

A Sloppy Experiment

Are these two quantities measurable?

Attention cost should somewhat correlate to lines of code. I mean, adding one line of code costs attention. Not all lines are priced the same, of course, but definitely more lines = higher cost, so the total LOC count could be an indirect measure of attention cost.

Attention spent is more difficult to gauge. If developers carefully tracked how much time they spent architecting, writing, fixing, and testing, then we could tally it all up and get a number. Fortunately, we don’t usually have to do that, but we do leave crumbs signaling that some time was spent: git commits, pull request comments, issue replies, etc.

For open-source projects, all this information is available on GitHub. So, as one does these days, I fired up Claude and asked it to slop me together an app that collects all the data we need and spits out a score from 0 to 5.

You can try it yourself at Slop-O-Meter.dev: just paste in a public repo URL and wait for the results.

The Scoring Algorithm

As I said above, I use lines of code to estimate attention cost, but of course

I can’t just do wc -l **/* and call it a day.

First of all I filter out non-code files (READMEs, config files, etc.) and other stuff like vendored dependencies and committed artifacts. I then weigh the rest according to two main criteria:

- The type of file, reasoning that it costs more to write a line of JavaScript than a line of CSS.

- The size of the codebase at the time the file Line, actually. was written. The idea here is that adding code to a larger codebase is more difficult and requires more attention.

As for attention spent, I treat human-made commits and GitHub comments (on PRs, issues, etc.) as “signals of attention”, with each signal adding a certain amount of hours to the total expenditure.

Signals are weighed as well: attention from a veteran of the project counts more than the attention of a drive-by contributor.

I do these measurements on a week-by-week basis: weeks that see an attention deficit increase the slop score. Weeks with an attention surplus lower it.

This is a simplified description, of course, but you can find the more detailed spec here. (Note: it’s still a very primitive algorithm.)

So? Can It Measure Slop?

Not really, unfortunately.

Or rather, for many repos it seems to work, but for others it utterly fails, making it difficult to trust the results.

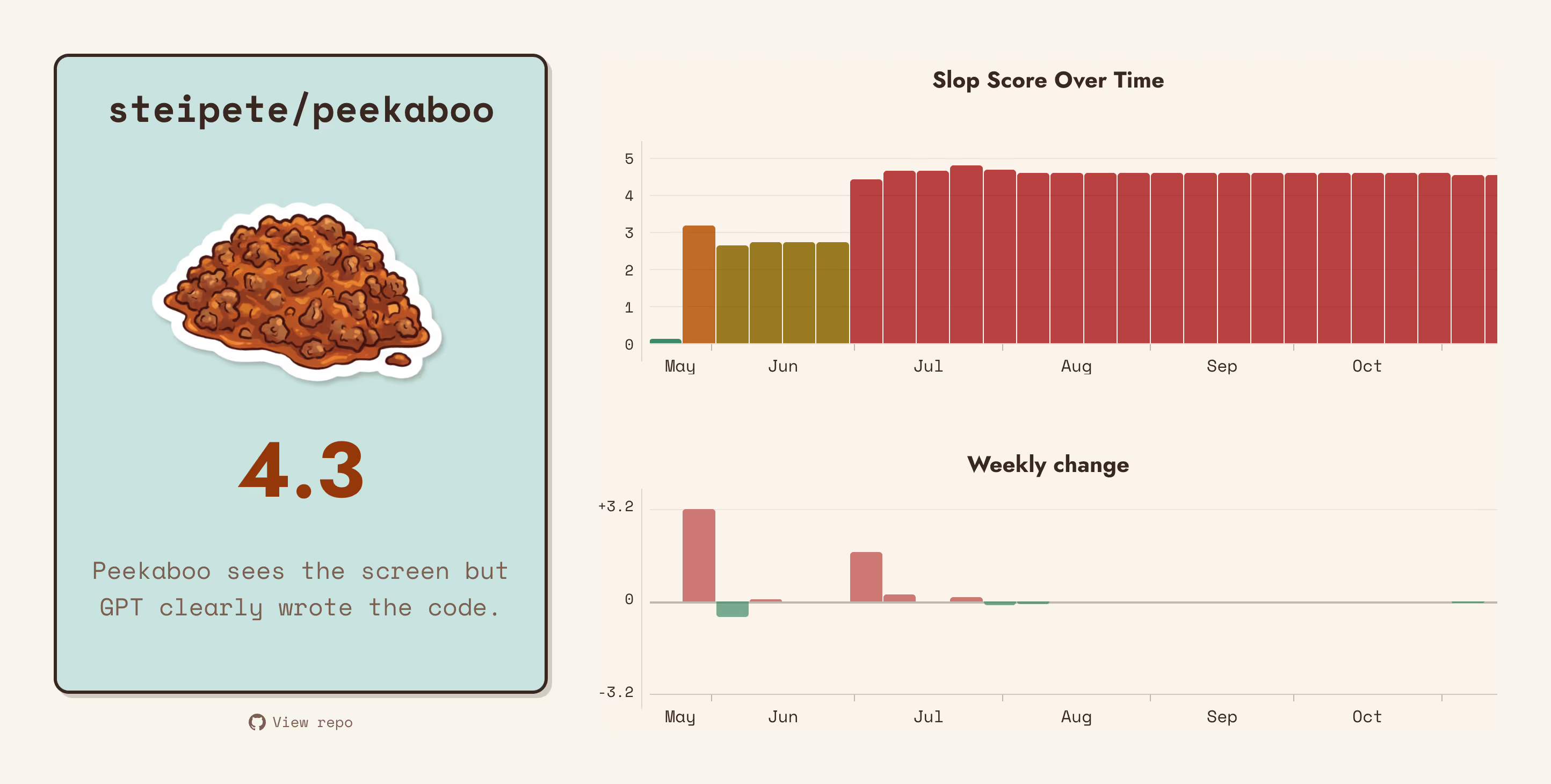

If we look for example at repos by @steipete, proud vibe coder, they all get consistently high scores, suggesting that the detector is onto something:

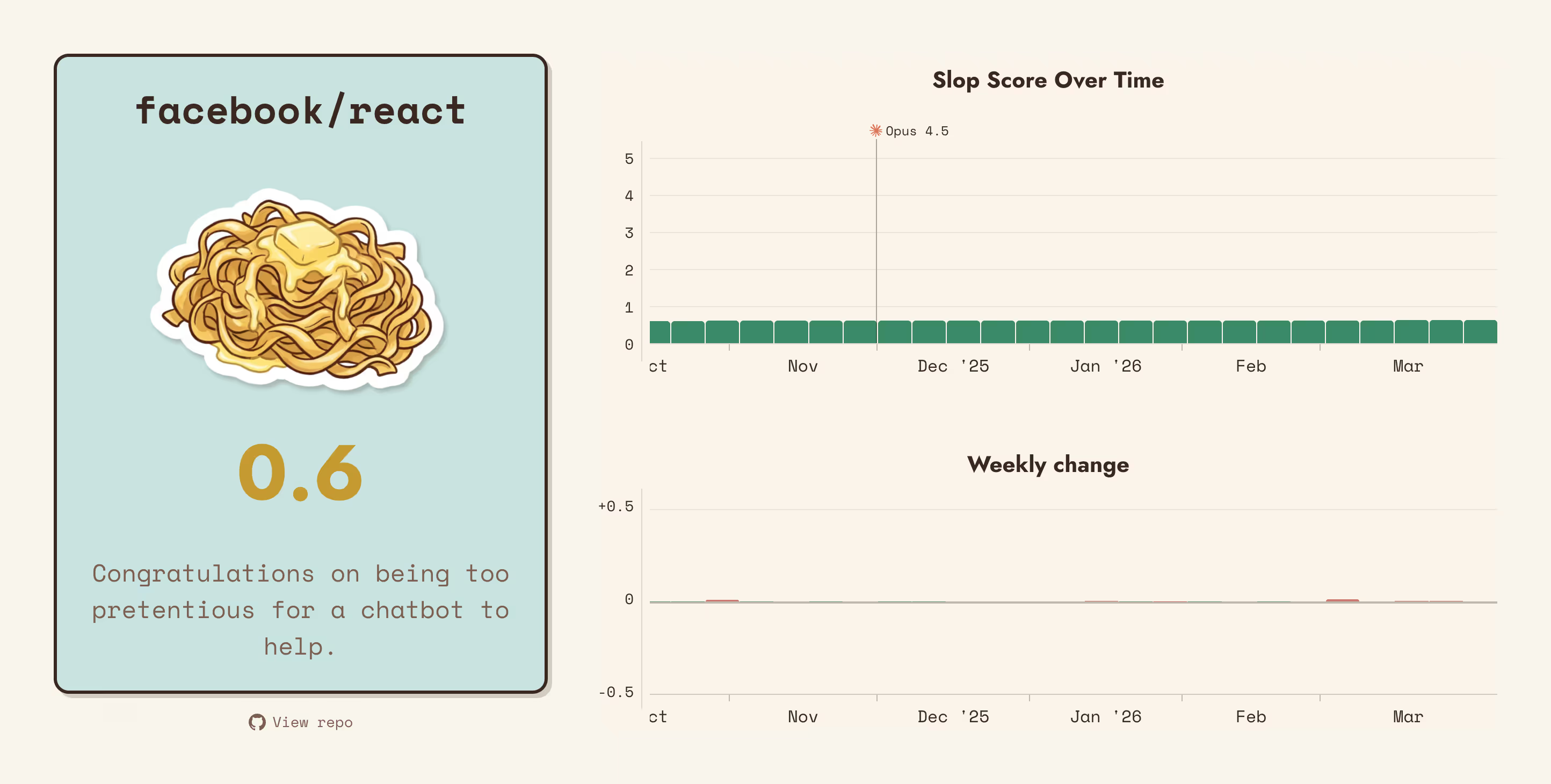

Meanwhile, React and other similarly tightly-run projects only show traces of slop:

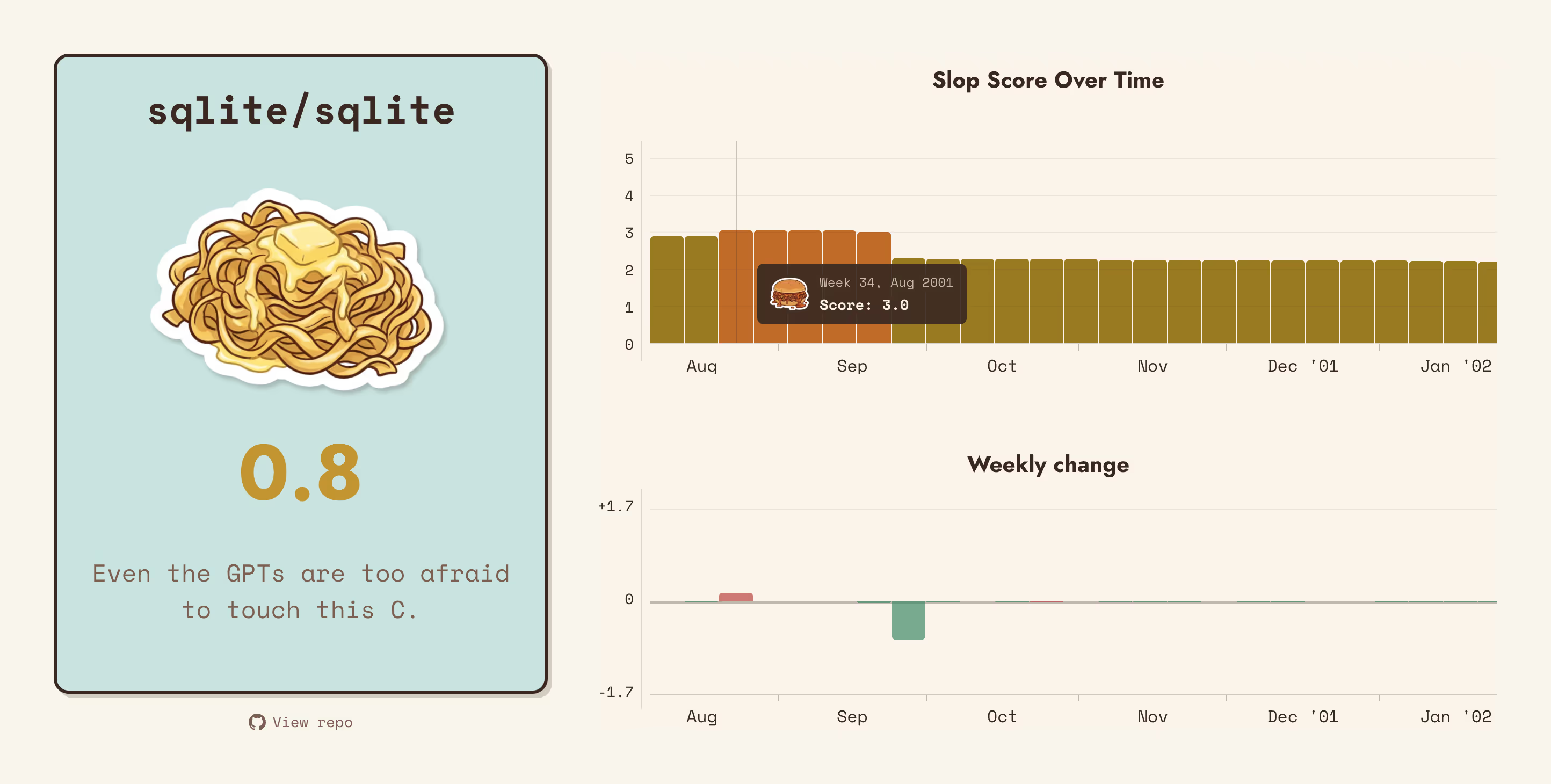

But there are egregious false positives:

The current score for SQLite is plausible, but if we look at historical scores, we get an unbelievable 3.0 in August 2001. Talk about a project that’s ahead of its time!

The issue here is that SQLite is developed on a custom version control system (Fossil), and the git repo is only a mirror which gets occasional code drops but no other activity. This is what vibe development looks like, so the algorithm flags it.

We could discount SQLite as a one-off exception, but unfortunately other legitimate development workflows exhibit a similar pattern. For example, development behind closed doors, or doing infrequent big commits rather than frequent small ones.

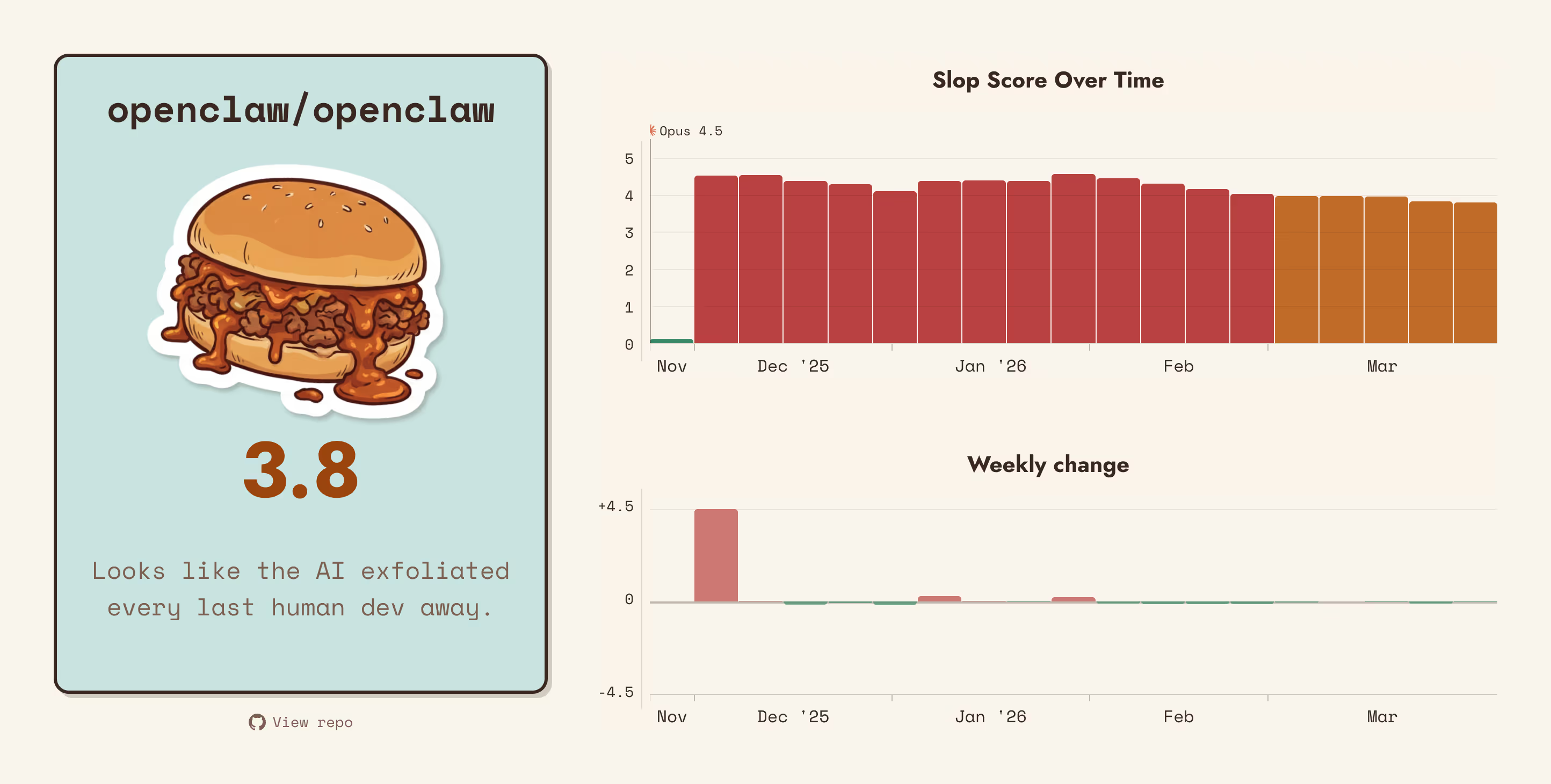

There are also some other minor issues. Take my app that was besmirched as slopware:

I could even accept 1.5 as a reasonable score. After all, since Opus 4.5 I have been using AI a lot more and, while I carefully review every morsel of slop and edit it to match my fussy tastes, it’s reasonable to assume some of it escaped my inspection.

However, the score is not building up gradually since the release of the model (end of Nov 2025). Instead it jumps by a whole point sometime in October, when I merged a huge PR I had worked on night and day without much assistance at all.

To some extent, code produced in a rush to push out some new feature could also be considered slop. But that’s my slop! 100% organic, and not exactly what I would like to measure.

Is There Hope For The Algorithm?

Maybe. Claude told me that the core idea is brilliant and insightful, so I really ought to explore it further.

I think I can make some improvements if I collect more attention signals and calibrate them better. For example, at the moment the “attention weight” of a commit is the same for all repos, but I could try to infer it from the repo’s history, so I don’t penalize repos where contributors prefer larger, more infrequent commits.

Ideas for the future. In the meantime, you can find the code for the experiment on GitHub, and if you too have ideas on how to improve the measurement, just open a PR and you’ll get (pending my approval) a preview environment where you can test your theories.